Caution

You're reading an old version of this documentation. If you want up-to-date information, please have a look at 0.10.2.

librosa.feature.tonnetz

- librosa.feature.tonnetz(*, y=None, sr=22050, chroma=None, **kwargs)

Computes the tonal centroid features (tonnetz).

This representation uses the method of [1] to project chroma features onto a 6-dimensional basis representing the perfect fifth, minor third, and major third each as two-dimensional coordinates.
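As a rough illustration of this projection, the NumPy sketch below places each pitch class on three circles: fifths (angular step 7π/6 per semitone), minor thirds (3π/2) and major thirds (2π/3), with radii 1, 1 and 0.5. It then takes the average of those points, weighted by each frame's L1-normalized chroma energy. These constants follow the tonal-centroid construction of Harte, Sandler and Gasser (2006), which is assumed here to be the method cited as [1]. The helper names `tonnetz_basis` and `tonnetz_from_chroma` are hypothetical, only 2-D `(n_chroma, t)` input is handled, and this is not librosa's own source code.

```python
import numpy as np


def tonnetz_basis(n_chroma: int = 12) -> np.ndarray:
    """Return a (6, n_chroma) basis; column l is pitch class l in tonnetz space."""
    # Position of each chroma bin in semitones (0, 1, ..., 11 for 12 bins).
    semitones = np.arange(n_chroma) * (12.0 / n_chroma)
    # Angle advanced per semitone on the circles of fifths, minor thirds
    # and major thirds (assumed Harte et al. constants).
    steps = np.array([7.0 * np.pi / 6.0, 3.0 * np.pi / 2.0, 2.0 * np.pi / 3.0])
    # Circle radii; the major-third circle is drawn at half size.
    radii = np.array([1.0, 1.0, 0.5])
    angles = np.outer(steps, semitones)                  # shape (3, n_chroma)
    basis = np.empty((6, n_chroma))
    basis[0::2] = radii[:, np.newaxis] * np.sin(angles)  # rows 0, 2, 4: x
    basis[1::2] = radii[:, np.newaxis] * np.cos(angles)  # rows 1, 3, 5: y
    return basis


def tonnetz_from_chroma(chroma: np.ndarray) -> np.ndarray:
    """Project a non-negative (n_chroma, t) chromagram to a (6, t) tonnetz."""
    chroma = np.asarray(chroma, dtype=float)
    # L1-normalize every frame so each column sums to 1; all-zero
    # (silent) frames are divided by 1 instead and stay at zero.
    totals = chroma.sum(axis=0, keepdims=True)
    weights = chroma / np.where(totals > 0, totals, 1.0)
    # Weighted average of the pitch-class points gives the tonal centroid.
    return tonnetz_basis(chroma.shape[0]) @ weights


# Frame 0: only C is active.  Frame 1: a C-major triad (C, E, G).
example = np.zeros((12, 2))
example[0, 0] = 1.0
example[[0, 4, 7], 1] = 1.0
print(np.round(tonnetz_from_chroma(example), 3))
```

With these constants, a frame containing only pitch class 0 (C) maps to (0, 1, 0, 1, 0, 0.5), while a frame with equal energy in all 12 bins maps to the origin, because each circle's points are spread evenly around it.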

- Parameters:

- y : np.ndarray [shape=(…, n,)] or None

Audio time series. Multi-channel is supported.

- sr : number > 0 [scalar]

Sampling rate of y.

- chroma : np.ndarray [shape=(n_chroma, t)] or None

Normalized energy for each chroma bin at each frame. If None, a CQT chromagram is computed from y.

- **kwargs

Additional keyword arguments to chroma_cqt, if chroma is not pre-computed.

- C : np.ndarray [shape=(…, d, t)] [Optional]

A pre-computed constant-Q spectrogram.

- hop_length : int > 0

Number of samples between successive chroma frames.

- fmin : float > 0

Minimum frequency to analyze in the CQT. Default: C1 ~= 32.7 Hz.

- norm : int > 0, +-np.inf, or None

Column-wise normalization of the chromagram.

- threshold : float

Pre-normalization energy threshold. Values below the threshold are discarded, resulting in a sparse chromagram.

- tuning : float

Deviation (in fractions of a CQT bin) from A440 tuning.

- n_chroma : int > 0

Number of chroma bins to produce.

- n_octaves : int > 0

Number of octaves to analyze above fmin.

- window : None or np.ndarray

Optional window parameter to filters.cq_to_chroma.

- bins_per_octave : int > 0, optional

Number of bins per octave in the CQT. Must be an integer multiple of n_chroma. Default: 36 (3 bins per semitone). If None, it will match n_chroma.

- cqt_mode : [‘full’, ‘hybrid’]

Constant-Q transform mode
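When chroma is not supplied, the keyword arguments above govern how a chromagram is derived from a constant-Q spectrogram. The function below is a simplified, hypothetical stand-in for that step (it is not chroma_cqt or filters.cq_to_chroma). It sums CQT magnitudes across octaves, merges bins_per_octave / n_chroma neighbouring bins into each chroma bin (centred on the semitone), zeroes values below threshold, and then normalizes each column. It assumes the first CQT bin lies exactly on pitch class 0, as with the default fmin of C1 and no tuning deviation, and it ignores the window option.

```python
import numpy as np


def fold_cqt_to_chroma(C, n_chroma=12, bins_per_octave=36,
                       threshold=0.0, norm=np.inf):
    """Simplified CQT-to-chroma folding (hypothetical helper, not librosa's).

    Assumes C has shape (n_octaves * bins_per_octave, t) and that its
    first bin sits exactly on pitch class 0.
    """
    C = np.abs(np.asarray(C)).astype(float)
    if bins_per_octave % n_chroma != 0:
        raise ValueError("bins_per_octave must be an integer multiple of n_chroma")
    n_bins, n_frames = C.shape
    if n_bins % bins_per_octave != 0:
        raise ValueError("C must contain a whole number of octaves")
    n_merge = bins_per_octave // n_chroma  # e.g. 3 bins per semitone

    # 1. Sum energy across octaves: shape (bins_per_octave, t).
    per_octave = C.reshape(-1, bins_per_octave, n_frames).sum(axis=0)
    # 2. Shift by half a group so the bins just below and above each
    #    semitone are grouped with it (pitch classes wrap around).
    per_octave = np.roll(per_octave, n_merge // 2, axis=0)
    # 3. Merge n_merge neighbouring bins into one chroma bin.
    chroma = per_octave.reshape(n_chroma, n_merge, n_frames).sum(axis=1)

    # 4. Pre-normalization threshold: small values are discarded.
    chroma[chroma < threshold] = 0.0

    # 5. Column-wise normalization; norm=None skips it.
    if norm is not None:
        mag = np.abs(chroma)
        if norm == np.inf:
            scale = mag.max(axis=0, keepdims=True)
        elif norm == -np.inf:
            scale = mag.min(axis=0, keepdims=True)
        else:
            scale = (mag ** norm).sum(axis=0, keepdims=True) ** (1.0 / norm)
        chroma = chroma / np.where(scale > 0, scale, 1.0)
    return chroma


# 7 octaves x 36 bins, 4 frames of random magnitudes.
rng = np.random.default_rng(0)
demo = fold_cqt_to_chroma(rng.random((7 * 36, 4)), threshold=0.5)
print(demo.shape)  # (12, 4)
```

Because the threshold is applied before normalization in this sketch, it is expressed in the units of the raw CQT magnitudes rather than of the normalized chroma.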

- Returns:

- tonnetz : np.ndarray [shape=(…, 6, t)]

Tonal centroid features for each frame.

- Tonnetz dimensions:

0: Fifth x-axis

1: Fifth y-axis

2: Minor x-axis

3: Minor y-axis

4: Major x-axis

5: Major y-axis
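Since the six rows form three (x, y) pairs, each pair can also be read in polar form: the radius roughly indicates how concentrated the frame's energy is on that circle, and the angle indicates where on the circle it lies. The helper `tonnetz_polar` below is a hypothetical illustration of that reading, not part of librosa. It assumes the row order listed above and that, as in the tonal-centroid construction, x-coordinates come from sines and y-coordinates from cosines of the pitch-class angle, so the angle is recovered with arctan2(x, y).

```python
import numpy as np

# Row pairs (x, y) of a tonnetz array, in the documented order.
CIRCLES = {"fifth": (0, 1), "minor_third": (2, 3), "major_third": (4, 5)}


def tonnetz_polar(tonnetz):
    """Hypothetical helper: per-circle radius and angle of a (..., 6, t) array."""
    tonnetz = np.asarray(tonnetz, dtype=float)
    if tonnetz.ndim < 2 or tonnetz.shape[-2] != 6:
        raise ValueError("expected shape (..., 6, t)")
    result = {}
    for name, (ix, iy) in CIRCLES.items():
        x = tonnetz[..., ix, :]
        y = tonnetz[..., iy, :]
        result[name] = {
            # Distance from the circle's centre: near 0 when energy is spread
            # evenly around the circle, larger when it is concentrated.
            "radius": np.hypot(x, y),
            # Angle in radians, assuming x ~ sin(theta) and y ~ cos(theta).
            "angle": np.arctan2(x, y),
        }
    return result


# A single frame at the tonnetz coordinates of pitch class C.
frame = np.array([[0.0], [1.0], [0.0], [1.0], [0.0], [0.5]])
polar = tonnetz_polar(frame)
print(polar["major_third"]["radius"])  # [0.5]
```

Leading dimensions such as channels are preserved, so a multi-channel array of shape (channels, 6, t) yields radii and angles of shape (channels, t).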

See also

chroma_cqt : Compute a chromagram from a constant-Q transform.

chroma_stft : Compute a chromagram from an STFT spectrogram or waveform.

Examples

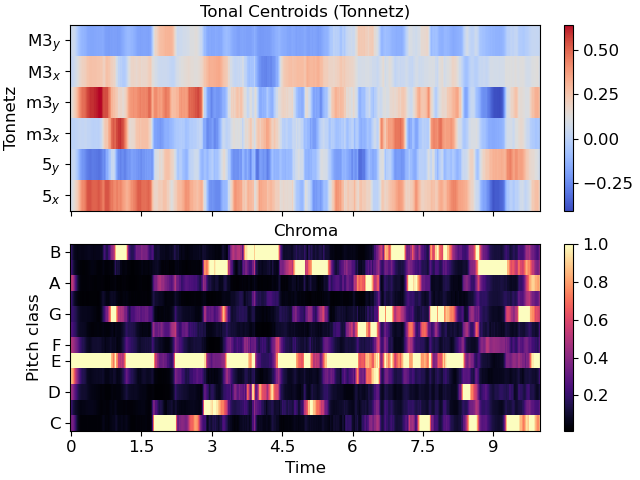

Compute tonnetz features from the harmonic component of a song:

>>> import librosa
>>> y, sr = librosa.load(librosa.ex('nutcracker'), duration=10, offset=10)
>>> y = librosa.effects.harmonic(y)
>>> tonnetz = librosa.feature.tonnetz(y=y, sr=sr)
>>> tonnetz
array([[ 0.007, -0.026, ...,  0.055,  0.056],
       [-0.01 , -0.009, ..., -0.012, -0.017],
       ...,
       [ 0.006, -0.021, ..., -0.012, -0.01 ],
       [-0.009,  0.031, ..., -0.05 , -0.037]])

Compare the tonnetz features to chroma_cqt:

>>> import matplotlib.pyplot as plt
>>> import librosa.display
>>> fig, ax = plt.subplots(nrows=2, sharex=True)
>>> img1 = librosa.display.specshow(tonnetz,
...                                 y_axis='tonnetz', x_axis='time', ax=ax[0])
>>> ax[0].set(title='Tonal Centroids (Tonnetz)')
>>> ax[0].label_outer()
>>> img2 = librosa.display.specshow(librosa.feature.chroma_cqt(y=y, sr=sr),
...                                 y_axis='chroma', x_axis='time', ax=ax[1])
>>> ax[1].set(title='Chroma')
>>> fig.colorbar(img1, ax=[ax[0]])
>>> fig.colorbar(img2, ax=[ax[1]])